No, robots do not grow and develop the way living things do. A robot has no cells to divide and no DNA to replicate, and it cannot build new mass from nutrients the way a plant or animal does. But that's not the whole story. Robots do change over time, sometimes dramatically, through manufacturing, software updates, learning systems, and even physical reconfiguration. Whether any of that counts as "growth" depends entirely on which definition you use. Let's sort it out.

Do Robots Grow and Develop? What Changes and What Doesn’t

What people usually mean by "robots" vs "living" growth

When most people ask this question, they're mixing up two very different ideas of what "growth" means. In biology, growth means an increase in cell size and number over an organism's life history. Britannica puts it plainly: cells divide, and that division drives the development of an entire organism. The instructions come from DNA, which is copied and split between the daughter cells at every division. That's a self-directed, energy-driven process happening from the inside out.

Robots are defined very differently. ISO 8373, the international standard for robotics vocabulary, defines a robot as a "programmed actuated mechanism with a degree of autonomy" intended to perform functions like manipulation, locomotion, or positioning. Crucially, the control system is considered part of the robot itself. So a robot is fundamentally a designed artifact running instructions written by humans, not a self-directing biological entity. The word "development" applied to a robot almost always refers to something an engineer did to it, not something it did to itself.

That distinction matters because it shapes what kinds of change are even possible. Biological growth is generative: the organism makes more of itself using resources from the environment. Engineering development is prescriptive: a human designs a system, builds it, programs it, and updates it. Those are fundamentally different processes, even if both result in a more capable system over time.

Robot "development" during manufacturing and programming

The closest thing a robot has to early development happens during manufacturing and commissioning, which is the process of bringing a robot from parts to a functional, deployed system. This involves physical assembly, systems integration, testing, calibration, and initial programming. Each of these steps changes the robot's capability, and taken together they look a lot like a developmental arc.

Calibration is especially worth understanding. The calibration literature commonly describes it as a four-step process: modeling, measurement, identification, and compensation. One study found that a continuous calibration method improved positioning accuracy by 84.31% using just 15 updated poses. Another reported that mean positioning errors dropped from 1.81 mm all the way down to 0.10 mm after identification and compensation, a reduction of roughly 94%. That's a dramatic change, but notice what it is: error reduction. The robot isn't gaining a new skill. It's getting closer to performing the task it was always designed to do.
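The four calibration steps can be illustrated on a deliberately tiny model. The sketch below assumes a single linear axis with an unknown scale error and offset (hypothetical values, not taken from the studies above) and noise-free measurements. Real calibration identifies many kinematic parameters at once from noisy measurements with imperfect models, which is why residual errors such as 0.10 mm remain.

```python
from statistics import mean

# 1. Modeling (assumed): a single linear axis where
#    actual = scale * commanded + offset, with scale and offset unknown.


def identify(commanded, measured):
    """3. Identification: least-squares fit of measured = scale * commanded + offset."""
    cx, my = mean(commanded), mean(measured)
    sxx = sum((c - cx) ** 2 for c in commanded)
    sxy = sum((c - cx) * (m - my) for c, m in zip(commanded, measured))
    scale = sxy / sxx
    return scale, my - scale * cx


def compensate(target, scale, offset):
    """4. Compensation: invert the identified model to get a corrected command."""
    return (target - offset) / scale


# Hypothetical "true" axis errors that the controller does not know about (mm).
TRUE_SCALE, TRUE_OFFSET = 1.002, 0.8


def actual_position(commanded):
    return TRUE_SCALE * commanded + TRUE_OFFSET


# 2. Measurement: command a few poses and record where the axis really went.
poses = [0.0, 100.0, 200.0, 300.0, 400.0]
measured = [actual_position(p) for p in poses]

scale, offset = identify(poses, measured)
target = 250.0
before = abs(actual_position(target) - target)
after = abs(actual_position(compensate(target, scale, offset)) - target)
print(f"error before: {before:.3f} mm, after: {after:.6f} mm")
```

Note that nothing in this loop gives the axis a new ability: compensation only moves the same mechanism closer to where it was always supposed to go.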

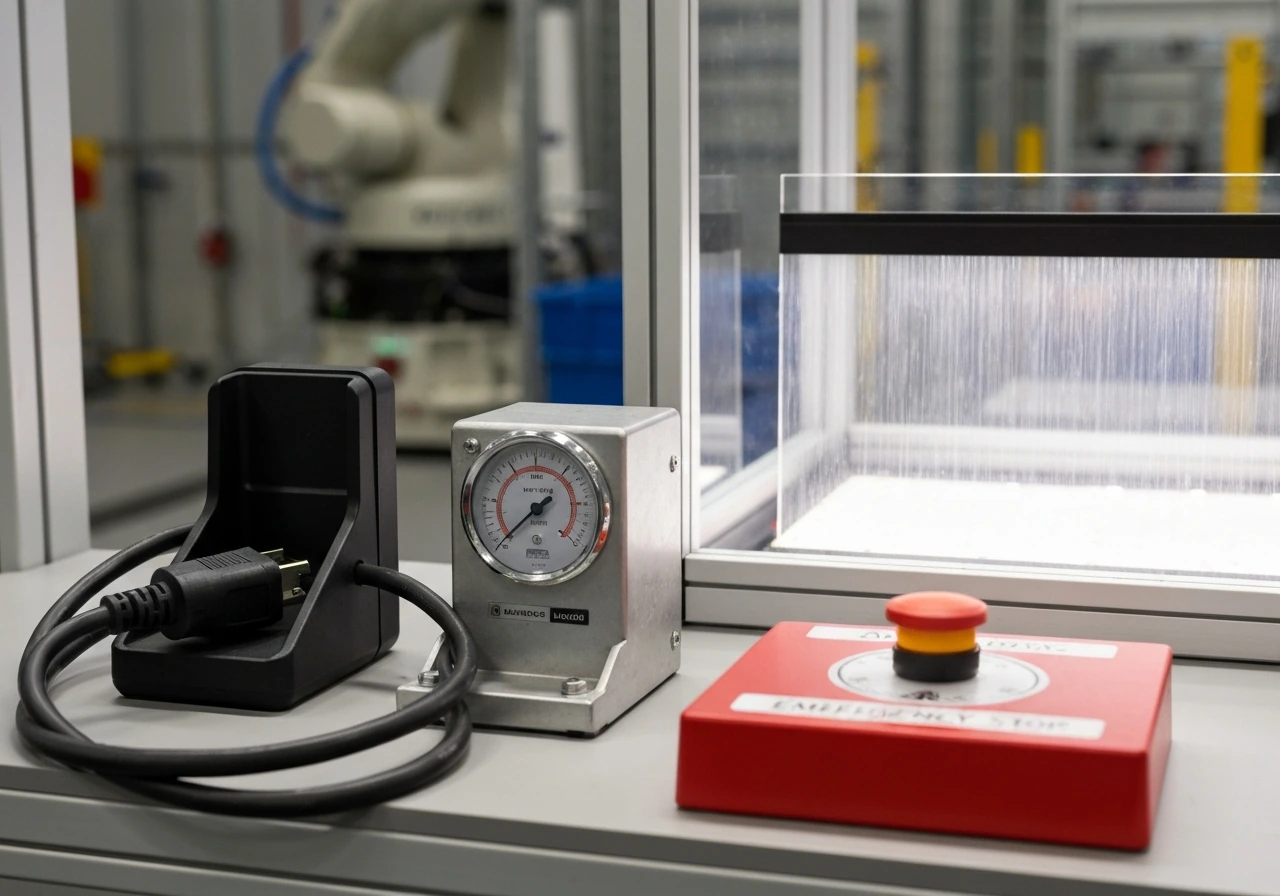

The commissioning phase also includes safety verification. Universal Robots, for example, requires explicit safety I/O testing and verification that safety signals correctly detect changes before a robot can be deployed. This is a formal checkpoint, not a moment of organic growth. Think of it like a newborn receiving a health screening: the screening doesn't cause development, it verifies what's already there.

Self-improvement and learning: software updates and adaptation

Here's where things get genuinely interesting. Modern robots can change their behavior after deployment through software updates, machine learning, and online reinforcement learning. Researchers have demonstrated real-robot continual learning systems where a robot learns to handle objects it has never seen before, with limited labeling, and improves its performance over sequential tasks. That does look like development in a meaningful sense: the robot acquires new capabilities it didn't have before.

But there's a hard limit built into these systems. A well-documented problem called catastrophic forgetting means that when a neural network learns something new, it often overwrites what it learned before. Sequential learning is not the same as cumulative growth. A child who learns to ride a bike doesn't forget how to walk. A standard neural network trained on new data often does something equivalent to forgetting how to walk. Researchers are actively working on this, but it remains a persistent constraint on how much software-based "development" a robot can genuinely accumulate.
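A toy model makes the overwriting mechanism concrete. The setup below is hypothetical and not drawn from any cited study: a two-weight linear model learns task A, then task B. Both tasks can be solved at the same time, yet training on B alone moves the weight that A depended on. Mixing old examples back into training (rehearsal, or replay) is one common mitigation and is included for contrast.

```python
# Task A only constrains w1 (w1 = 1); task B constrains the sum (w1 + w2 = 3).
# A single weight vector, w = (1, 2), satisfies both tasks.
TASK_A = [((1.0, 0.0), 1.0)]
TASK_B = [((1.0, 1.0), 3.0)]


def predict(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))


def mse(w, data):
    return sum((predict(w, x) - y) ** 2 for x, y in data) / len(data)


def train(w, data, lr=0.1, epochs=500):
    """Per-example gradient descent on 0.5 * (prediction - target) ** 2."""
    w = list(w)
    for _ in range(epochs):
        for x, y in data:
            err = predict(w, x) - y
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
    return w


w_a = train([0.0, 0.0], TASK_A)          # learns A: w approaches (1, 0)
w_seq = train(w_a, TASK_B)               # then B alone: w approaches (2, 1)
w_replay = train(w_a, TASK_A + TASK_B)   # B while replaying A: w approaches (1, 2)

print("task A loss after learning A:     ", round(mse(w_a, TASK_A), 4))
print("task A loss after learning B only:", round(mse(w_seq, TASK_A), 4))
print("task A loss after B with replay:  ", round(mse(w_replay, TASK_A), 4))
```

The forgetting here is not a bug in the optimizer: plain gradient descent on task B simply has no reason to protect the weight values that task A needed.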

Online learning is also bounded by safety requirements. Safe deployment of reinforcement learning in real environments requires that any policy changes satisfy safety specifications throughout the update process. Compute budget is another real wall: research on learning under fixed resource caps shows that those caps fundamentally limit how much a robot can absorb. And every significant firmware or software update to a safety-certified robot may require re-validation under standards like ISO 10218 and IEC 61508. That's a regulatory brake on how fast or freely a robot can "grow" through software.
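A simplified way to picture a safety-bounded update is an acceptance gate: a learned policy only replaces the deployed one if it passes a safety evaluation and actually improves. The sketch below is hypothetical (the names `gated_update` and `EvalResult` are illustrative), and it checks only the candidate before deployment. It does not enforce safety during the learning process itself, which is what constrained reinforcement learning methods aim to do.

```python
from dataclasses import dataclass
from typing import Callable, Tuple, TypeVar

Policy = TypeVar("Policy")


@dataclass
class EvalResult:
    task_score: float       # higher is better
    safety_violations: int  # spec violations observed during evaluation


def gated_update(
    current: Policy,
    candidate: Policy,
    evaluate: Callable[[Policy], EvalResult],
    max_violations: int = 0,
    min_gain: float = 0.0,
) -> Tuple[Policy, str]:
    """Deploy the candidate only if it passes the safety check and does not regress."""
    cur, cand = evaluate(current), evaluate(candidate)
    if cand.safety_violations > max_violations:
        return current, "rejected: violates safety specification"
    if cand.task_score < cur.task_score + min_gain:
        return current, "rejected: no measurable improvement"
    return candidate, "accepted"
```

In practice `evaluate` would run in simulation or a controlled test cell; the gate's job is only to make "keep the old policy" the default outcome.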

IEEE RAS frames the key distinction as the difference between automated robots (scripted, non-adapting) and robots with genuine autonomy and adaptation. NIST adds that true autonomy requires adaptive mechanisms to overcome disturbances and complete objectives, not just stable performance in known conditions. By that measure, most deployed industrial robots today are closer to the automated end of the spectrum, with limited genuine adaptation.

Physical growth vs maintenance: do robots add mass or parts over time?

In the biological sense, no. A standard robot does not add mass, grow new limbs, or expand its physical structure from internal resources. What robots actually do physically over time is wear down. Operating temperature, lubricant condition, and mechanical stress all degrade joints and components. Strain-wave gearing, common in precision robots, wears differently depending on thermal cycling and lubrication quality. Industrial maintenance guides frame most physical intervention as corrective or preventive upkeep, preserving existing performance rather than expanding it.

That said, there are research-stage exceptions worth knowing about. Modular robotics is a field where robots are built from self-encapsulated units, each with its own microprocessor, actuators, and power supply. New modules can be added to extend capabilities without rebuilding the whole system. Swarm robotics takes this further: researchers have demonstrated self-assembly systems where robot swarms form user-specified shapes autonomously. One origami-based swarm system can self-fold and self-disassemble on command, with feedback improving repeatability over time. And there are theoretical frameworks for carrier robots that recursively build swarms of builders from modular blocks, though these remain largely in simulation or highly controlled lab settings.

Even these systems don't truly grow in the biological sense. They reconfigure or assemble from pre-manufactured parts supplied by humans. The raw materials don't come from the robot metabolizing its environment. The blueprint isn't self-generated. It's still engineering, just with a more flexible physical architecture.

Constraints and limits: energy, materials, control systems, and safety

Every form of robot "growth" or development runs into hard walls. These aren't just technical inconveniences. They're structural limits that define what robots can and can't do.

- Energy budgets: Robots depend on external power sources. Unlike biological organisms that extract energy from food and metabolize it internally, robots need a continuous supply of electricity or fuel. Expanding capability means expanding energy draw, and that has to be planned for externally.

- Material supply: Any physical expansion or module addition requires components manufactured elsewhere and transported to the robot. There's no equivalent to the way organisms convert nutrients into new tissue.

- Control system limits: The robot's control architecture has to be designed to handle any new configuration or capability. Adding a new arm or sensor doesn't automatically mean the system can use it. Software and firmware must be updated, tested, and validated.

- Safety certification: Industrial robots operating near humans are governed by functional safety standards. Any significant change, hardware or software, can trigger re-certification requirements. This makes rapid or continuous growth practically difficult in deployed environments.

- Catastrophic forgetting and compute caps: As mentioned, learning systems face memory and resource constraints that prevent unlimited accumulation of new capabilities.
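Several of these walls can be expressed as checks that must pass before a new module goes live. The sketch below is hypothetical: the classes, field names, and the conservative rule that any hardware addition to a certified robot needs safety review are assumptions for illustration, covering the energy, control-system, and certification constraints.

```python
from dataclasses import dataclass, field


@dataclass
class Module:
    name: str
    power_w: float  # steady-state electrical draw
    driver: str     # control software the module needs


@dataclass
class Robot:
    power_supply_w: float
    validated_drivers: set = field(default_factory=set)
    modules: list = field(default_factory=list)

    def power_draw_w(self) -> float:
        return sum(m.power_w for m in self.modules)


def check_addition(robot: Robot, module: Module, safety_certified: bool) -> list:
    """Return blocking issues; an empty list means the module can be integrated."""
    issues = []
    # Energy budget: power comes from outside, so headroom must already exist.
    if robot.power_draw_w() + module.power_w > robot.power_supply_w:
        issues.append("energy budget exceeded")
    # Control system: new hardware is unusable until software for it is validated.
    if module.driver not in robot.validated_drivers:
        issues.append("no validated control software for " + module.driver)
    # Safety (conservative assumption): treat any addition to a certified robot
    # as a change that needs re-validation before use.
    if safety_certified:
        issues.append("safety re-validation required")
    return issues
```

Each check points to a decision made outside the robot, which is the practical meaning of "growth" being externally planned.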

NIST's performance assessment framework decomposes robotic capability into measurable components: perception, mobility, dexterity, and safety. Each of these has testable limits, and improving one often involves tradeoffs with others. That's a useful way to think about why robot "growth" is bounded: every capability axis has engineering constraints on both ends.

Analogies to biological growth: what transfers and what doesn't

It's genuinely useful to compare robots to biological systems, as long as you're honest about where the analogy breaks down. Understanding how organisms grow through cell division and DNA replication makes the contrast with robots sharper and more instructive.

| Feature | Biological Growth | Robot Development |
|---|---|---|
| Source of instructions | DNA, internally encoded and self-replicating | External design, programming by humans |
| Raw material acquisition | Organism metabolizes food/nutrients into new mass | Materials must be supplied and installed externally |
| Self-replication | Yes, via cell division and reproduction | No, except in very early-stage research concepts |
| Adaptation mechanism | Gene expression, epigenetics, neural plasticity | Software updates, machine learning (with forgetting limits) |
| Physical expansion | Yes, organisms add mass and differentiate tissue | No standard growth; modular robots can add pre-built components |
| Constraints on size | Surface-area-to-volume ratio, energy, structural limits | Energy supply, material supply, control system design, safety certification |
| Developmental program | Encoded in genome, unfolds with environmental input | Written by engineers, updated by humans or learning systems |

The developmental biology framework, which explains how an organism's structure changes over time through differentiation and morphogenetic mechanisms driven by gene activity, has no clean equivalent in robotics. Gene-expression patterns and graded chemical signals that determine cell behavior are self-organizing in a way that robot control systems simply aren't. A robot's "development" is always tethered to external human decisions at some level.

Where the analogy does hold up is in the idea of constraint-driven limits. Biological cells can't grow indefinitely because of the surface-area-to-volume problem: as a cell gets bigger, its volume grows faster than the surface area through which it exchanges nutrients and waste. Robots face analogous scaling problems, where adding capability means adding complexity, power demand, and control overhead that eventually outpaces the benefit. The mechanism is different, but the principle of constrained growth applies in both domains.
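The surface-area-to-volume limit is easy to see with a worked formula. Idealizing a cell as a sphere of radius $r$ (a simplifying assumption; real cells vary in shape):

```latex
A = 4\pi r^{2}, \qquad
V = \tfrac{4}{3}\pi r^{3}, \qquad
\frac{A}{V} = \frac{4\pi r^{2}}{\tfrac{4}{3}\pi r^{3}} = \frac{3}{r}
```

Doubling the radius multiplies surface area by 4 but volume by 8, so the exchange surface available per unit of volume is cut in half.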

The nervous system analogy is also worth examining carefully. How neurons grow and form connections through activity-dependent plasticity is sometimes compared to how neural networks in robots "learn." There's a surface resemblance: both involve strengthening certain connections based on experience. But biological neurons grow physically, extending axons and dendrites, forming synapses through molecular signaling. A software neural network adjusts numerical weights in a matrix. The metaphor is useful for intuition but misleading if taken literally.

Brain development adds another layer of complexity. The way the brain's biological neural circuits are refined through pruning, myelination, and experience-dependent tuning over years is a far richer process than anything current robot learning systems do. Biological brains don't just update weights. They physically reorganize, eliminate unnecessary connections, and strengthen essential pathways in response to experience, all guided by gene expression and environmental input simultaneously.

Even at the cellular level, the growth of brain cells depends on molecular signals, vascular supply, and structural scaffolding that have no robotic equivalent. Nerves extend by steering growth cones along chemical gradients (chemotaxis), a self-directed, environmentally responsive process that makes robot path-planning look simple by comparison.

The nervous system as a whole is especially instructive here. Its changes across a lifetime involve both predetermined genetic programs and continuous environmental shaping. Robot control systems can be updated, but they don't carry an internally encoded developmental program that unfolds autonomously in response to experience the way a nervous system does.

Practical way to evaluate any robot's ability to grow or develop today

If you're looking at a specific robot and want to evaluate honestly whether it can "grow" or "develop," here's a practical checklist. Apply it to the spec sheet, the manufacturer documentation, or the research literature. It should give you a clear answer for most systems.

- Ask: Can it acquire new task capabilities after deployment, or only perform tasks it was programmed for at build time? If the answer is only pre-programmed tasks, it operates; it doesn't develop.

- Check for online learning support. Does the robot's control system support continual or online learning? If yes, ask whether there are documented limits (compute budget, forgetting prevention, safety constraints on policy updates).

- Look for modular hardware support. Can new sensors, end effectors, or structural modules be added and automatically integrated? Or does adding hardware require full reprogramming and re-certification?

- Find the calibration vs capability distinction. Does the manufacturer describe "updates" in terms of accuracy improvement (calibration, maintenance) or in terms of new task domains (genuine capability expansion)? These are very different things.

- Check NIST's agility framing. NIST defines agility as reconfigurability and autonomy beyond rigid pre-programmed tasks. Ask whether the robot's spec sheet makes any claims about agility, reconfigurability, or adaptive autonomy, and whether those claims are supported by test data.

- Evaluate the safety re-certification burden. How often does the robot require re-validation after updates? A robot that needs full re-certification every time it receives a software update has a much slower effective development cycle than one with validated incremental update pathways.

- Look for forgetting mitigation. If the robot uses neural-network-based learning, ask whether the architecture includes any mechanism to prevent catastrophic forgetting (such as generative replay, elastic weight consolidation, or memory buffers). Without this, learning may not accumulate stably.

- Distinguish wear from growth. Is the robot's physical change over time a matter of degradation and maintenance, or does it actually add functional capacity? Wear and maintenance are not development.
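The checklist can be recorded as a small structured assessment so that different robots are compared on the same questions. In this hypothetical sketch the field names are illustrative, the first question acts as a gate (no new capability means the robot operates rather than develops), and the cut-off of three supporting items is an arbitrary choice, not an established standard.

```python
from dataclasses import dataclass


@dataclass
class RobotProfile:
    # Answers to the checklist questions; field names are illustrative.
    learns_new_tasks_after_deployment: bool
    online_learning_limits_documented: bool
    modular_hardware_auto_integrates: bool
    updates_expand_task_domains: bool        # False if updates are only calibration/accuracy
    agility_claims_backed_by_tests: bool
    incremental_update_path_validated: bool  # False if every update needs full re-certification
    forgetting_mitigation: bool
    physical_change_adds_capacity: bool      # False if change over time is only wear and repair


def assess(p: RobotProfile) -> str:
    # Gate: if nothing ever adds capability, the robot operates rather than develops.
    gains_capability = (
        p.learns_new_tasks_after_deployment
        or p.updates_expand_task_domains
        or p.physical_change_adds_capacity
    )
    if not gains_capability:
        return "operates"
    support = sum([
        p.online_learning_limits_documented,
        p.modular_hardware_auto_integrates,
        p.agility_claims_backed_by_tests,
        p.incremental_update_path_validated,
        p.forgetting_mitigation,
    ])
    # Arbitrary cut-off: three or more supporting items counts as well supported.
    return "develops (well supported)" if support >= 3 else "develops (weakly supported)"
```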

Running any robot through this checklist will tell you clearly whether you're looking at a system that genuinely develops over time or one that operates, degrades, and gets repaired. Most industrial robots today fall firmly into the latter category. A small and growing set of research systems, particularly in modular and continual-learning robotics, are starting to move toward the former. But even those systems are nowhere near the self-directed, internally driven growth that biology achieves through cell division, gene expression, and the elegant machinery of development. That gap is real, it's large, and it's worth understanding clearly.

FAQ

Can a robot grow or develop at all?

If you mean “growth” as adding new physical parts or mass, the answer is basically no, because robots do not manufacture components from nutrients. If you mean “development” as capability improvement, it can happen through reprogramming, calibration refinement, or learning updates, but that improvement is constrained by what the designers built in (sensors, actuators, model class, safety limits) and by how much data and compute you allow after deployment.

When a robot gets software updates, how can I tell whether that is real development or just tuning and maintenance?

Look for three signs of genuine development rather than maintenance: (1) the system acquires a new capability that was not in the original behavior set (for example handling previously unseen object categories), (2) the adaptation persists in a way that is measurable after the learning session ends, and (3) the change is driven by the robot’s interaction data rather than only by human reparameterization. If the “change” only restores performance after wear, it is corrective maintenance at best, not capability growth.

What exactly causes “catastrophic forgetting,” and how should I test for it in practice?

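Catastrophic forgetting arises because the same network weights are shared across tasks: gradient updates for a new task move those weights to wherever the new task needs them, overwriting values that earlier tasks relied on. It matters most when training is sequential and the system has no mechanism to preserve past knowledge (such as rehearsal of old examples, regularization that protects important parameters, or expandable architectures). A practical check is to ask whether the vendor or paper reports performance on earlier tasks after new training, not just performance on the newest tasks.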
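Results on earlier tasks are often summarized as an accuracy matrix plus a forgetting score, a style of metric common in continual-learning papers. The sketch below is one minimal version, assuming `acc[i][j]` holds accuracy on task `j` measured after training stage `i`; the function names and the example numbers in the test are hypothetical.

```python
def forgetting_per_task(acc):
    """acc[i][j] = accuracy on task j after training stage i (requires j <= i).

    For every task except the last, returns how far its final accuracy fell
    below the best accuracy it reached at any earlier stage. Negative values
    mean the task actually improved after later training.
    """
    last = len(acc) - 1
    return {
        j: max(acc[i][j] for i in range(j, last)) - acc[last][j]
        for j in range(last)
    }


def average_forgetting(acc):
    drops = forgetting_per_task(acc)
    return sum(drops.values()) / len(drops) if drops else 0.0
```

A report that only shows the last row's newest entry (performance on the latest task) cannot reveal forgetting; the earlier columns of the final row are what matter.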

Is online learning in real robots safe, and how is safety usually enforced during updates?

Reinforcement learning updates can be “safer” in two ways. First, the training process may be constrained by a safety layer (for example shielding, action constraints, or runtime monitors) so policy changes cannot violate safety specifications. Second, many systems avoid high-risk exploration in real settings by training in simulation or controlled environments first, then using transfer methods. If neither is true, “continual learning” may be limited to offline or narrowly bounded scenarios.
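A safety layer of the first kind can be sketched as a filter between the learned policy and the motors. In the hypothetical example below, an action constraint clips joint velocities and a runtime monitor scales speed down as a person approaches; the function name and every threshold are placeholders, not values from any safety standard.

```python
from typing import List, Sequence, Tuple


def shield(
    action: Sequence[float],
    velocity_limit: float,
    human_distance_m: float,
    stop_distance_m: float = 0.5,
    slow_distance_m: float = 1.5,
) -> Tuple[List[float], bool]:
    """Filter a learned policy's velocity command before it reaches the motors.

    Returns the safe command and whether the shield had to intervene.
    All thresholds are hypothetical placeholders.
    """
    # Action constraint: clip every joint velocity to the allowed box.
    clipped = [max(-velocity_limit, min(velocity_limit, a)) for a in action]
    # Runtime monitor: scale speed down linearly as a person gets closer.
    if human_distance_m <= stop_distance_m:
        scale = 0.0
    elif human_distance_m < slow_distance_m:
        scale = (human_distance_m - stop_distance_m) / (slow_distance_m - stop_distance_m)
    else:
        scale = 1.0
    safe = [scale * a for a in clipped]
    return safe, safe != list(action)
```

Because the shield sits outside the learner, policy updates can change what the robot tries to do without changing what it is allowed to do.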

How does compute budget limit how much a robot can learn after deployment?

Compute budget is a limiting factor because learning requires repeated inference and gradient updates, plus buffering data for training or replay. In real deployments, limited compute can force smaller models, fewer training steps, shorter learning horizons, or more conservative update schedules, which reduces how much new capability can accumulate over time.
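Memory for replay is one place where a budget shows up directly. A common technique for keeping a fixed-size, uniformly representative sample of an unbounded data stream is reservoir sampling; the class below is a hypothetical minimal version. Once the buffer is full, each new experience survives with probability `capacity / seen`, so older and newer data stay equally represented without the buffer ever growing.

```python
import random


class ReservoirBuffer:
    """Fixed-capacity replay buffer using reservoir sampling."""

    def __init__(self, capacity, seed=None):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self._rng = random.Random(seed)

    def add(self, item):
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(item)
        else:
            # Draw uniformly from 0..seen-1; keep the item only if it lands in the buffer.
            j = self._rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = item

    def sample(self, k):
        """Return up to k stored items for a replay batch."""
        return self._rng.sample(self.items, min(k, len(self.items)))
```

The trade-off is explicit: a smaller `capacity` saves memory and compute, but each old experience becomes less likely to be replayed, which weakens protection against forgetting.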

Do modular or swarm robots count as “growing” like living things?

A robot that can self-assemble or use modular units can appear to “develop,” but the materials and modules are still supplied by humans or by a pre-manufactured system. To decide whether it counts as development, ask whether the robot can generate new structure using raw environmental inputs (biological-like) versus assembling prebuilt modules (engineering-like reconfiguration). Most modular and swarm demonstrations fall in the reconfiguration category.

What is a practical checklist I can apply to a robot I’m considering (product or lab system)?

Use a spec-to-capability checklist. Verify whether the robot has (1) an ability to adapt its policy or model, (2) defined retention behavior across tasks, (3) repeatable improvements in independent tests, (4) safety-compliant update procedures, and (5) evidence it can handle distribution shifts, not only in-distribution variations. If any item is missing, it likely performs automated operation plus occasional human-led updates.

Why do most industrial robots end up in the “degrade and get repaired” category?

For industrial robots, the most common outcome is wear-down and periodic maintenance, not cumulative developmental progress. You can see this by the emphasis on calibration, gearbox or gearing health, lubrication schedules, and downtime-based service procedures. Even when control software is improved, many changes are about tightening accuracy or meeting compliance requirements rather than adding new autonomous abilities.

Can robots ever develop in an open-ended way without frequent human intervention?

Only to a limited extent, and it depends on how “growth” is defined. A robot can improve its externally observable behavior, but it usually cannot self-generate its own architecture, self-replicate components, or maintain open-ended learning without periodic resets, revalidation, and guardrails. If a system requires frequent human intervention to keep learning effective and safe, it is better described as engineered adaptation rather than autonomous development.

If a robot keeps learning, does it always keep improving forever?

Not necessarily. A useful framing is a “capability ceiling.” Even if a robot learns, its eventual performance can plateau because of the environment’s complexity, sensor resolution limits, actuation constraints, and safety envelopes that restrict exploration. In practice, development often means improving toward a bounded target, not continuously expanding in every direction.
